From Detection to Remediation: DeepWaste AI Adds Quantified Savings and Implementation Guidance

On February 27, 2026, PointFive announced DeepWaste™ AI, highlighting quantified savings estimates and implementation guidance to move AI efficiency from detection to remediation. The product’s differentiator, PointFive says, is not only finding inefficiency across the full AI execution stack, but translating those findings into quantified remediation steps that align with how engineering and FinOps teams operate.

Why “Visibility” Isn’t Enough in Production AI

Production AI introduces a multi-layered cost and performance system. Waste can emerge from model selection, token consumption, routing logic, caching behavior, GPU utilization, retry patterns, and data platform orchestration. Many organizations can see the effects (rising spend, drifting latency, tightening budgets) but struggle to connect those symptoms to actionable changes across teams.

PointFive’s message is that traditional cloud optimization tools weren’t built to analyze AI-specific execution behavior across these layers. DeepWaste AI aims to close the gap between identifying inefficiency and implementing change, turning optimization into a continuous discipline rather than a set of dashboards.

Agentless Deployment to Reduce Friction

PointFive says DeepWaste AI connects directly to cloud APIs, LLM service metrics, GPU telemetry, and billing systems without agents, instrumentation, or code changes. By default, optimization runs using metadata, billing signals, performance metrics, and resource configuration data without requiring raw inference logs. This design is meant to speed adoption while preserving privacy and minimizing data access requirements.

Organizations that want deeper evaluation can enable optional inference-level analysis to assess prompt architecture and orchestration logic. Customers control the depth of analysis, allowing teams to adapt the module to policy requirements.

The Scope: LLM Services, GPUs, and Data Platforms

DeepWaste AI provides native connectivity across AWS (Bedrock, SageMaker, and managed AI services), Azure (Azure OpenAI, Azure ML, Cognitive Services), and GCP (Vertex AI and AI services), plus the OpenAI and Anthropic direct APIs. The module also continuously optimizes GPU infrastructure by identifying underutilized or idle GPUs, instance-type mismatches, OS and driver misconfigurations, and hardware-to-workload misalignment.

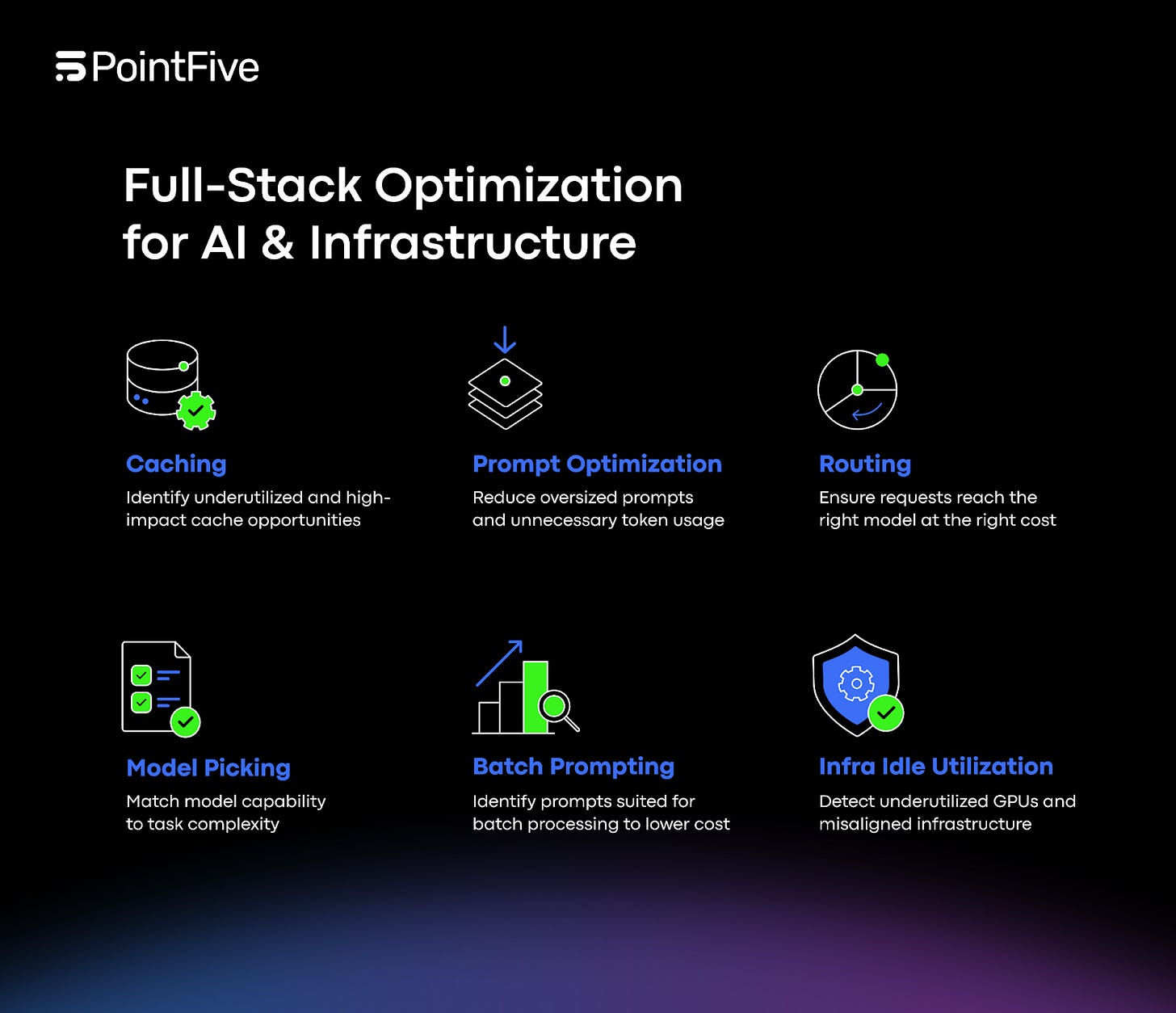

On the data side, native support for Snowflake and Databricks extends optimization across AI data platforms from data ingestion through inference. PointFive frames the combined coverage as “full-stack” optimization rather than inference-only visibility.
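To make the GPU portion of this scope concrete, the sketch below shows one simple way utilization telemetry could be turned into "idle" and "underutilized" labels. It is an illustration only, not PointFive's method: the `GpuSample` type, the `classify_gpus` function, and the 5% and 30% thresholds are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class GpuSample:
    """One utilization reading for one GPU (hypothetical telemetry shape)."""
    gpu_id: str
    utilization_pct: float  # 0 to 100


def classify_gpus(samples, idle_below=5.0, underused_below=30.0):
    """Label each GPU by its mean utilization over the sampled window.

    The thresholds are illustrative assumptions, not vendor defaults.
    """
    readings = {}
    for sample in samples:
        readings.setdefault(sample.gpu_id, []).append(sample.utilization_pct)

    labels = {}
    for gpu_id, values in readings.items():
        average = mean(values)
        if average < idle_below:
            labels[gpu_id] = "idle"
        elif average < underused_below:
            labels[gpu_id] = "underutilized"
        else:
            labels[gpu_id] = "ok"
    return labels


samples = [
    GpuSample("gpu-a", 1.0), GpuSample("gpu-a", 3.0),
    GpuSample("gpu-b", 20.0), GpuSample("gpu-b", 25.0),
    GpuSample("gpu-c", 80.0),
]
labels = classify_gpus(samples)
```

A real detector would also weigh the sampling window, memory pressure, and workload schedules; a mean over a few readings is only the simplest possible signal.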

Findings Structured for Action

DeepWaste AI structures and enriches every invocation with task classification, routing context, cost attribution, and infrastructure alignment signals. It detects inefficiency across four layers: Model & Routing Intelligence, Token & Prompt Economics, Caching & Reuse Optimization, and Infrastructure & Operational Leakage. PointFive says each detection is grounded in unified workload signals rather than surface-level billing anomalies, producing a behavioral understanding of how AI services operate and where efficiency can be improved.

Quantified Savings as a Decision Tool

PointFive emphasizes that DeepWaste AI attaches a quantified savings estimate to every finding. That estimate is intended to help teams make tradeoffs in the way they actually work: deciding which engineering changes to prioritize, which infrastructure adjustments to schedule, and which optimizations to validate first. Rather than treating efficiency as a general aspiration, DeepWaste AI is positioned to make it measurable, with projected outcomes defined upfront.

Implementation Guidance Mapped to Workflows

Alongside savings estimates, PointFive says each finding includes clear implementation guidance, with recommendations prioritized by financial impact and mapped directly to engineering and FinOps workflows. This mapping is a key part of the product narrative. AI efficiency work often falls between teams: engineering owns application logic and routing, FinOps tracks spend, platform teams manage GPUs, and data teams run pipelines. DeepWaste AI is positioned to provide a shared set of signals and actions that connect these responsibilities across the stack.
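As a rough illustration of what "prioritized by financial impact and mapped to workflows" could look like in practice, the sketch below ranks findings by estimated savings and groups them by owning team. The `Finding` record, its field names, and the sample figures are hypothetical; PointFive has not published a data model for DeepWaste AI.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """Hypothetical shape of a single efficiency finding."""
    title: str
    owner: str  # team expected to act, e.g. "engineering" or "platform"
    est_monthly_savings_usd: float


def prioritize(findings):
    """Return findings ordered from largest to smallest estimated savings."""
    return sorted(findings, key=lambda f: f.est_monthly_savings_usd, reverse=True)


def group_by_owner(findings):
    """Map each owning team to its findings, preserving priority order."""
    queues = {}
    for finding in prioritize(findings):
        queues.setdefault(finding.owner, []).append(finding)
    return queues


findings = [
    Finding("Enable prompt caching", "engineering", 4200.0),
    Finding("Release idle GPU nodes", "platform", 9800.0),
    Finding("Route simple tasks to a smaller model", "engineering", 6100.0),
]
ranked = prioritize(findings)
queues = group_by_owner(findings)
```

Grouping after ranking gives each team its own ordered queue while keeping a single shared ranking for cross-team planning.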

Tracking Improvements Over Time

PointFive’s positioning goes beyond “identify and fix.” DeepWaste AI is meant to help teams evaluate projected savings before acting and track realized improvements over time. In other words, optimization becomes a continuous practice: detect inefficiency, prioritize by impact, implement changes, and measure the result. PointFive frames this as transforming AI efficiency from reactive cost monitoring into a continuous, measurable discipline across models, infrastructure, and data platforms.
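The "measure the result" step can be pictured as comparing realized savings against the projection made before the change. The sketch below is one minimal way to express that comparison; the function names and the sample figures are assumptions for illustration, not part of the product.

```python
def realized_savings(baseline_cost_usd, current_cost_usd):
    """Savings actually observed: spend before the change minus spend after."""
    return baseline_cost_usd - current_cost_usd


def realization_rate(projected_savings_usd, baseline_cost_usd, current_cost_usd):
    """Fraction of the projected savings that was actually realized.

    1.0 means the projection was met exactly; lower values mean a shortfall.
    """
    if projected_savings_usd <= 0:
        raise ValueError("projected savings must be positive")
    realized = realized_savings(baseline_cost_usd, current_cost_usd)
    return realized / projected_savings_usd


# Hypothetical month: 1,000 USD projected, spend fell from 5,000 to 4,200 USD.
rate = realization_rate(1000.0, 5000.0, 4200.0)
```

This simple comparison assumes workload volume stayed roughly constant between the two periods; if traffic changed, costs would need to be normalized (for example, per request) before the comparison is meaningful.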

Efficiency Without Sacrificing Control

“AI workloads introduce a new category of operational complexity,” said Alon Arvatz, CEO of PointFive. “DeepWaste AI gives organizations the intelligence required to scale AI efficiently, across models, infrastructure, and data platforms, without sacrificing control.”

DeepWaste AI is now available to PointFive customers.